What Are Compute Systems in Cloud and Virtualization Concepts?

Compute Systems

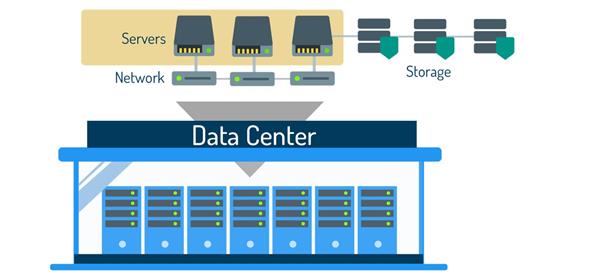

As covered in Section 1.2 Hardware and Software, a computer is composed of hardware and operating system (OS) software that runs applications. A computing system essentially means a computer; however, data centers use specialized computers called servers, so throughout this course the term computing system will often refer to a server. Both a server and a personal computer, such as a laptop, include physical components such as a processor, memory, and internal storage. What differentiates them is that the laptop is designed for direct, user-friendly interaction, while a server is not.

A personal computer tends to come with more input/output devices, such as a mouse, keyboard, and monitor, so that a user can interact directly with the system. Servers do not usually include components for direct user interaction. Every computing system, including a data center server, must also have logical components such as an OS and other forms of system software.

Outside of a data center, computing systems can be large or small, depending on a user's computing needs. For example, a gamer will probably use a large gaming laptop or PC tower because they need fast processing and room for graphics hardware, but that would be overkill for someone who is just looking up a recipe for dinner and would be fine with a tablet or phone. In a data center, however, a computing system must provide enough processing power to store and process data for a large number of users at one time, such as the users of businesses and government agencies. That is why data centers use servers. Unlike desktop computers, which spend much of their processing power on user-facing features such as a graphical desktop and media playback, servers are designed to use most of their processing power to host services and to interact with other machines. This makes servers more efficient and better equipped to handle heavy workloads.

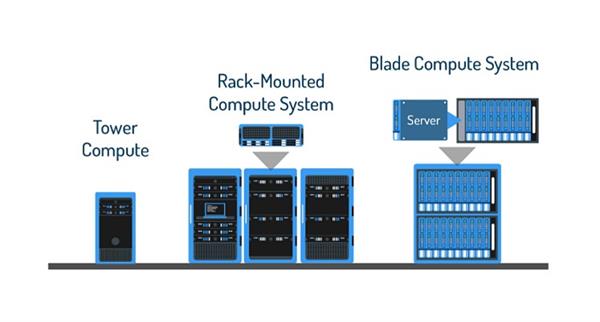

Data center servers fall into three types: tower, rack-mounted, and blade. The tower system resembles a traditional PC tower. Tower PCs are also commonly used in computer labs and offices, so you have more than likely used a tower system as a personal computer, but not as a server.

The rack-mounted system is a thin, wide, rectangular compute system that slides into a frame called a rack. When the frame is full of rack-mounted systems, it resembles a tall metal set of drawers. A typical frame used for this type of system is called a 19-inch rack, named for the width of the equipment front panels it accepts. The height of the frame is measured in rack units (U), where one rack unit is 1.75 inches of mounting height. For example, a standard full-height 19-inch rack is 42U, which means it holds up to 42 rack-mounted servers that are each 1U tall; a taller server, such as a 2U model, occupies more than one slot. A 42U rack is taller than the average person, but smaller frames are available, such as 1U and 8U, which take up less space.
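The rack-unit arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, the 1.75-inch figure is the standard height of one rack unit, and the calculation covers mounting height only, ignoring the frame itself as well as space reserved for switches, power, or cooling.

```python
# Illustrative sketch of rack-unit arithmetic (function names are hypothetical).
RACK_UNIT_INCHES = 1.75  # height of one rack unit (1U)


def rack_height_inches(units: int) -> float:
    """Return the mounting height, in inches, of a rack with `units` slots."""
    return units * RACK_UNIT_INCHES


def max_servers(rack_units: int, server_units: int) -> int:
    """Return how many servers of a given height fit in a rack."""
    if server_units <= 0:
        raise ValueError("server_units must be a positive number of rack units")
    return rack_units // server_units


if __name__ == "__main__":
    print(rack_height_inches(42))  # 73.5 inches of mounting height
    print(max_servers(42, 1))      # 42 servers that are 1U tall
    print(max_servers(42, 2))      # 21 servers that are 2U tall
```

A 42U rack therefore offers about 73.5 inches (a little over 6 feet) of mounting height, which is why it stands taller than most people.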

Lastly, the blade server, like the rack-mounted server, is rectangular hardware inserted into a larger frame, often called a chassis or enclosure. However, blades are usually inserted vertically, so the frame looks like a set of drawers turned on its side. Adoption of the smaller form-factor blade servers has grown considerably. Because the transition to blade architectures is generally driven by a desire to consolidate physical IT resources, virtualization is an ideal complement for blade servers: it delivers benefits such as resource optimization, operational efficiency, and rapid provisioning.

Brief History of the x86 Architecture

In addition to knowing server types, it is important to know that data centers typically use servers with an x86 architecture. Here, architecture refers to the design of the processor used by the server, specifically the set of instructions it can run. The x86 architecture has roots that reach back to the 8-bit processors built by Intel in the 1970s. As manufacturing capabilities improved and software demands increased, Intel extended the 8-bit architecture to 16 bits with the 8086 processor in 1978. In 1985, with the 80386 processor, Intel extended the architecture to 32 bits. Intel calls this architecture IA-32, but the vendor-neutral term x86 is also common.

Over the following two decades, the basic 32-bit architecture remained the same, although successive generations of CPUs added many new features, including support for large physical memories. In 2003, AMD introduced a 64-bit extension to the x86 architecture, called AMD64. In 2004, Intel announced its own 64-bit architectural extension, calling it IA-32e and later EM64T. This course will use the vendor-neutral term x64 to refer to the 64-bit extensions to the x86 architecture.

Understanding the difference between 32-bit and 64-bit processors is important because most modern virtualization platforms require a 64-bit processor. A 64-bit processor has advantages that a 32-bit processor lacks: it can address far more physical memory, which matters when that memory must be shared among many virtual machines, and it generally delivers better performance when running programs. Although 32-bit systems are still used in some cases, 64-bit processors are far more widely used and are more likely to include hardware support for virtualization. In fact, you are more than likely using an x64 platform right now, as most modern PCs and servers run on x64 CPUs.
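To make the memory difference concrete, the following Python sketch computes how many bytes a given number of address bits can reach and reports what the local machine looks like. It is a simplified illustration, and the helper names are hypothetical. Real processors differ from the idealized numbers: some 32-bit x86 CPUs can reach more than 4 GiB of physical memory through extensions, and current x64 CPUs implement fewer than 64 physical address bits.

```python
import platform
import struct


def addressable_bytes(address_bits: int) -> int:
    """Number of distinct byte addresses reachable with `address_bits` bits."""
    return 2 ** address_bits


def to_gib(num_bytes: int) -> float:
    """Convert a byte count to gibibytes (1 GiB = 2**30 bytes)."""
    return num_bytes / 2 ** 30


def pointer_width_bits() -> int:
    """Pointer size of the running Python interpreter, in bits.

    This reflects how Python was built (32-bit or 64-bit), which usually,
    but not always, matches the underlying CPU and operating system.
    """
    return struct.calcsize("P") * 8


if __name__ == "__main__":
    print(to_gib(addressable_bytes(32)))  # 4.0 GiB for 32-bit addresses
    print(to_gib(addressable_bytes(64)))  # 17179869184.0 GiB (16 EiB) in theory
    # The machine string varies by system, e.g. "x86_64" or "AMD64" on x64.
    print(platform.machine())
    print(pointer_width_bits())
```

On a typical x64 laptop or server, the last two lines would be expected to report an x64 machine type and a 64-bit pointer width, although the exact strings depend on the operating system.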

Consider This

Another benefit of x64 (64-bit) CPUs is that they are backward compatible with x86 (32-bit) software.

Clustering

In a data center, a number of similarly configured servers can be grouped together, with connections to the same network and storage, to provide an aggregate set of resources in the virtual environment. This group is called a cluster. When a server is added to a cluster, the server's resources become part of the cluster's resources. The cluster manages the resources of all the servers within it and acts as a single entity. This is beneficial because if one of the servers fails, another server in the cluster can take over its workload.
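The idea of pooled resources and failover can be illustrated with a small Python model. This is a conceptual sketch only: the `Server` and `Cluster` classes, their fields, and the server names are hypothetical and do not correspond to any particular virtualization product, which would also handle shared storage, networking, and restarting virtual machines.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Server:
    """A hypothetical server contributing resources to a cluster."""
    name: str
    cpu_cores: int
    memory_gib: int
    healthy: bool = True


@dataclass
class Cluster:
    """A hypothetical cluster that pools the resources of its servers."""
    servers: List[Server] = field(default_factory=list)

    def add(self, server: Server) -> None:
        # Adding a server makes its resources part of the cluster's pool.
        self.servers.append(server)

    def healthy_servers(self) -> List[Server]:
        return [s for s in self.servers if s.healthy]

    def total_cpu_cores(self) -> int:
        return sum(s.cpu_cores for s in self.healthy_servers())

    def total_memory_gib(self) -> int:
        return sum(s.memory_gib for s in self.healthy_servers())

    def pick_host(self) -> Server:
        """Return the first healthy server to run a workload on."""
        healthy = self.healthy_servers()
        if not healthy:
            raise RuntimeError("no healthy servers left in the cluster")
        return healthy[0]


if __name__ == "__main__":
    cluster = Cluster()
    for name in ("server-a", "server-b", "server-c"):
        cluster.add(Server(name, cpu_cores=16, memory_gib=64))

    print(cluster.total_cpu_cores(), cluster.total_memory_gib())  # 48 192

    # Simulate a failure: the cluster keeps working with the remaining servers.
    cluster.servers[0].healthy = False
    print(cluster.pick_host().name)                               # server-b
    print(cluster.total_cpu_cores(), cluster.total_memory_gib())  # 32 128
```

The workload is placed on whichever server is still healthy, and the pool shrinks by the failed server's share rather than disappearing, which is the benefit the cluster provides.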